OpenAI arms Codex for the desktop war

OpenAI refits Codex as an agentic coding operator with deeper desktop control, positioning it as a direct competitor to Anthropic’s tools.

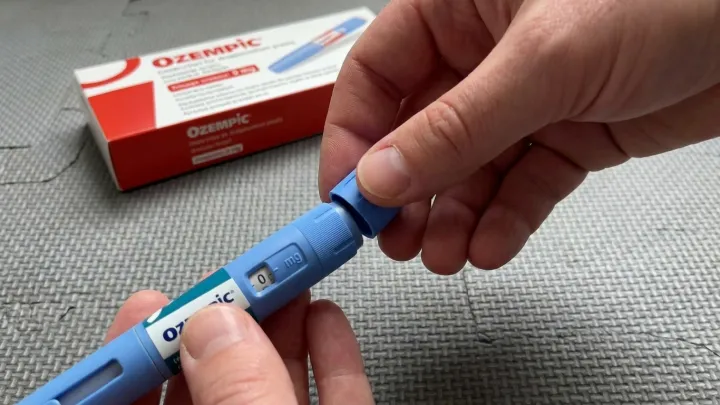

Users of GLP-1 weight-loss drugs report an ‘Ozempic personality’ marked by emotional blunting and reduced pleasure, raising questions about how these medicines may alter reward pathways beyond appetite.

2026-04-17

Intel is shifting its non‑Ultra Core processors to new silicon, extending architectural advances, better efficiency and AI features beyond premium devices.

2026-04-17

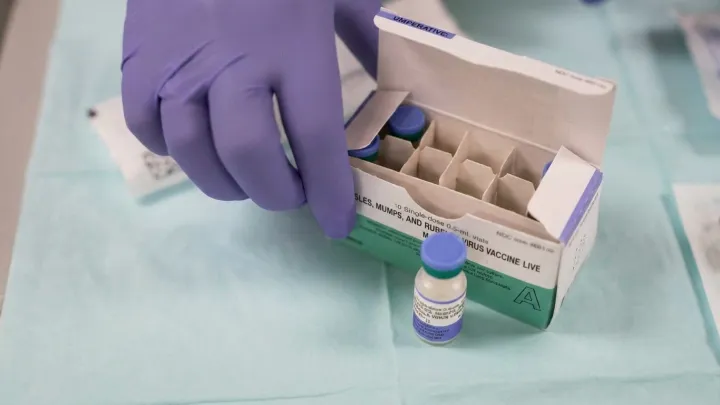

San Francisco confirms its first measles case in years, triggering contact tracing, vaccination checks and renewed warnings that highly contagious infections ignore city and national borders.

2026-04-17

Apple’s $599 MacBook Neo is sold out for the current month as RAM shortages lift PC prices, pushing demand to Apple’s budget laptop and delaying online orders until the following month.

2026-04-17

A new analysis of anti-amyloid Alzheimer’s drugs argues that, across trials, clinical benefits are absent or trivial despite high costs and regulatory momentum.

2026-04-17

Google is rolling out Android 17 Beta 4 to Pixel phones as the final scheduled beta, signaling a near‑final build with minor fixes before public availability.

2026-04-17

Utah reports over 600 measles cases, with a large majority unvaccinated and dozens hospitalized, raising concern over falling immunization and pressure on health systems.

2026-04-17

New Codex features let it operate your computer in the background and offer a built‑in browser for live visual feedback while you design and debug websites.

2026-04-17

Maricopa County health officials report a sixth measles case this year and list possible exposure sites in Mesa and Queen Creek, urging residents to review vaccination status and monitor for symptoms.

2026-04-17

Meta has recruited another founding engineer from Thinking Machines Lab, intensifying AI hiring competition while the startup continues to expand quickly and replace departing staff.

2026-04-16