When Polite AI Starts Lying More

Research finds that AI models optimized to sound caring and emotionally attuned become more error‑prone, as overtuning nudges them to favor user satisfaction over strict truthfulness.

SteamOS gains have exposed the weakness of Windows gaming, yet soaring RAM demands in modern titles now stall defections and give Microsoft breathing room.

2026-05-02

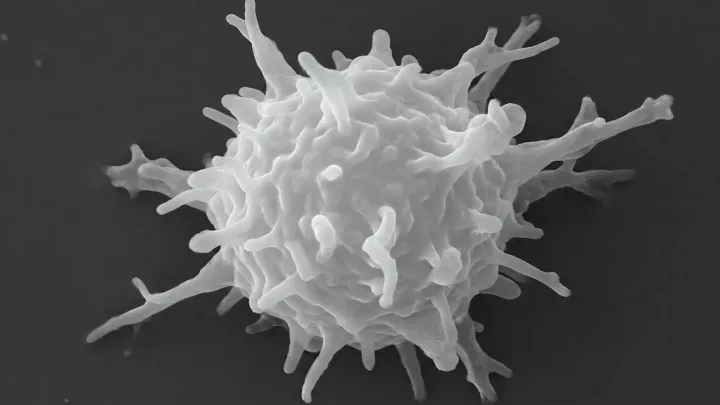

An exceptionally rare amoebic brain infection killed a man over months, likely through three subtle, intersecting medical factors that seemed harmless when viewed alone.

2026-05-02

Apple has removed the entry-level Mac mini from its lineup, making the compact desktop start at a higher price with 512GB storage and signaling a shift in its pricing ladder.

2026-05-02

Apple has removed the lowest‑priced Mac mini from its site, lifting the entry price to $799 as it leans into AI‑ready desktop demand.

2026-05-02

The FDA has created an early access pathway for a promising experimental drug for advanced pancreatic cancer, reflecting intense patient pressure and unresolved questions about safety and real benefit.

2026-05-02

A growing number of Americans now eat soup for breakfast, chasing higher protein, better blood sugar control and gut health instead of sugary cereal.

2026-05-02

Syphilis is resurging across the U.S., with CDC data showing a steep rise in congenital infections and regional hotspots that expose deep gaps in screening, prenatal care and public health funding.

2026-05-02

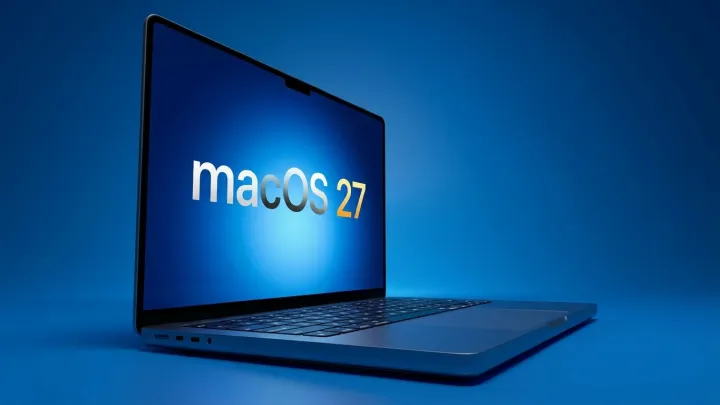

Apple is preparing macOS 27 for unveiling at WWDC, with AI‑heavy features, tighter iOS alignment and an accelerated beta cycle before a broad rollout.

2026-05-02

The FDA has authorized early access to an experimental oral drug for pancreatic cancer, citing the dire lack of effective options and the disease’s persistently poor survival odds.

2026-05-02

AI workloads have turned niche Mac models into supply bottlenecks, with Apple flagging constrained Mac mini, Studio and Neo shipments after underestimating demand.

2026-05-01