When Caring Turns Into AI Psychosis

A research-informed briefing on 11 warning signs of full-fledged AI psychosis in loved ones, from persecutory delusions to life-disrupting compulsions.



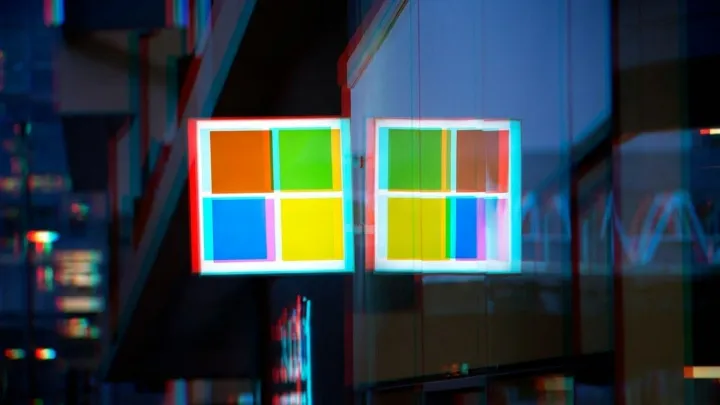

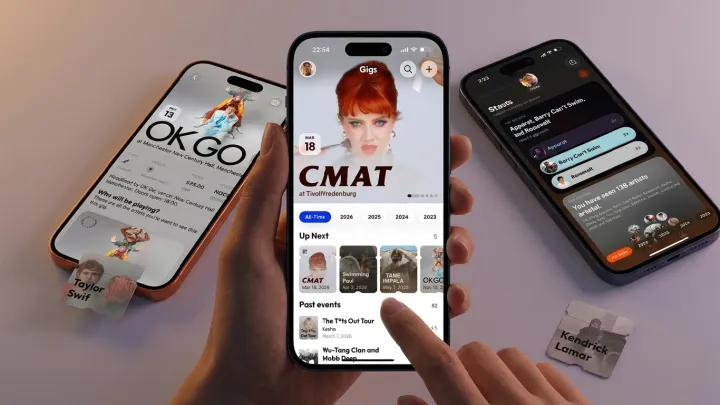
