Anthropic Sends Claude To Therapy

Anthropic subjected its Claude models to psychiatric-style evaluations to probe safety, coherence, and human alignment, and called Mythos the most psychologically settled system yet.

A once‑feared Victorian infection is resurging, sending up to 90 percent of patients to hospital and exposing gaps in vaccination, surveillance and public‑health capacity.

2026-04-10

America’s top cancer killer is increasingly hitting younger, healthy adults, as researchers probe gut microbes, ultra-processed diets, sedentary routines and environmental exposures for answers.

2026-04-10

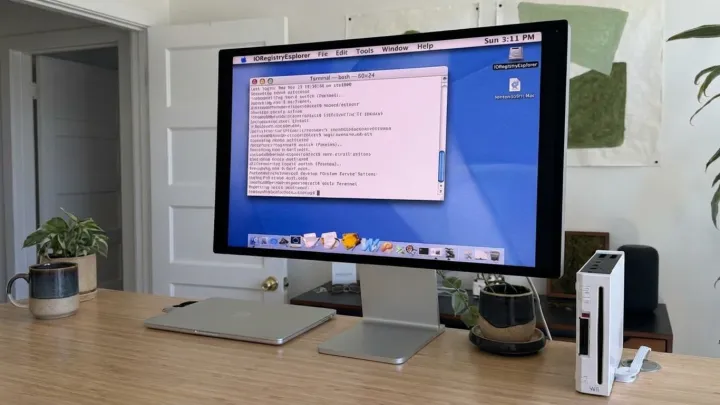

A maverick hacker has managed to run Mac OS X on a Nintendo Wii, using custom bootloaders and hardware workarounds in a tribute to the original “crazy ones” ethos.

2026-04-10

Maricopa County health officials confirmed a measles case in a Queen Creek resident and are tracing public exposure sites while urging vaccination and rapid reporting of symptoms.

2026-04-10

Health experts unpack what happens when you drink wine every day, from cardiovascular effects and cancer risk to safe alcohol limits and why dose matters more than hype.

2026-04-10

Marathon reportedly cost over $200 million to build. Player numbers have dropped sharply, yet sources say Bungie is not preparing an abrupt, Concord-style shutdown.

2026-04-10

Apple releases macOS Tahoe 26.4.1 as a minor bug fix update, available through System Settings, targeting stability and security issues for Mac users.

2026-04-10

An internal CDC study found COVID shots cut urgent care visits and hospitalizations by about half in healthy adults, but a Trump health official blocked its release.

2026-04-10

Razer unveils the Hammerhead V3 HyperSpeed gaming earbuds, focusing on low‑latency wireless audio, fast multipoint switching, and cross‑platform support for console, PC, and mobile play.

2026-04-10

New analysis of nearly 28,000 obesity patients suggests that genetic variants influencing appetite and drug metabolism may shape who responds to weight-loss injections.

2026-04-09